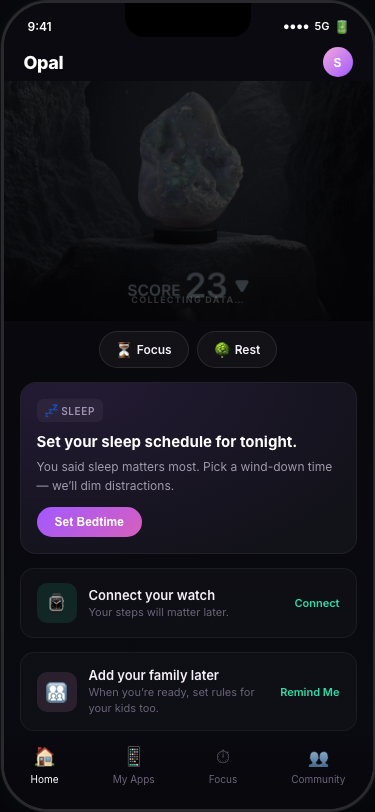

Opal Home Journey

A React prototype of the core Opal V4 feed, built around the "Sally" research persona (a stay-at-home mom focused on family screen-time governance and sleep).

We dropped the abstract 14-day narrative format from v1 because it read like a case study. The rebuilt UI looks and behaves like Sally's own phone screen: a primary bedtime-focused suggestion card, plus supporting widgets (Focus Report, Insights, Family usage) matched to the latest Figma designs.
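As a rough illustration of that layout, the feed could be modeled as one primary card followed by the three supporting widgets. This is only a sketch: the type names, the `buildHomeFeed` helper, and the placeholder titles are hypothetical and are not taken from the prototype's source or the Figma files.

```typescript
// Hypothetical data model for the home feed; every name here is illustrative.
type WidgetKind = "focus-report" | "insights" | "family-usage";

interface SuggestionCard {
  kind: "suggestion";
  focus: "bedtime"; // the primary card is bedtime-focused
  title: string;
}

interface SupportingWidget {
  kind: WidgetKind;
  title: string;
}

type FeedItem = SuggestionCard | SupportingWidget;

// Returns the feed in display order: primary card first, then the widgets.
function buildHomeFeed(): FeedItem[] {
  const primary: SuggestionCard = {
    kind: "suggestion",
    focus: "bedtime",
    title: "Bedtime suggestion", // placeholder copy, not the real card text
  };
  const widgets: SupportingWidget[] = [
    { kind: "focus-report", title: "Focus Report" },
    { kind: "insights", title: "Insights" },
    { kind: "family-usage", title: "Family usage" },
  ];
  return [primary, ...widgets];
}
```

A React component could map over the returned array and switch on `kind` to pick the matching card or widget component.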